Open-Source Microscopy

This is a summary of some of the open-source hardware projects we would like to share. We aim to break the inverse relationship between price and resolution. Feel free to contact us if you have cool ideas!

The list will be updated regularly.

Find more on our Nanoimaging webpage.

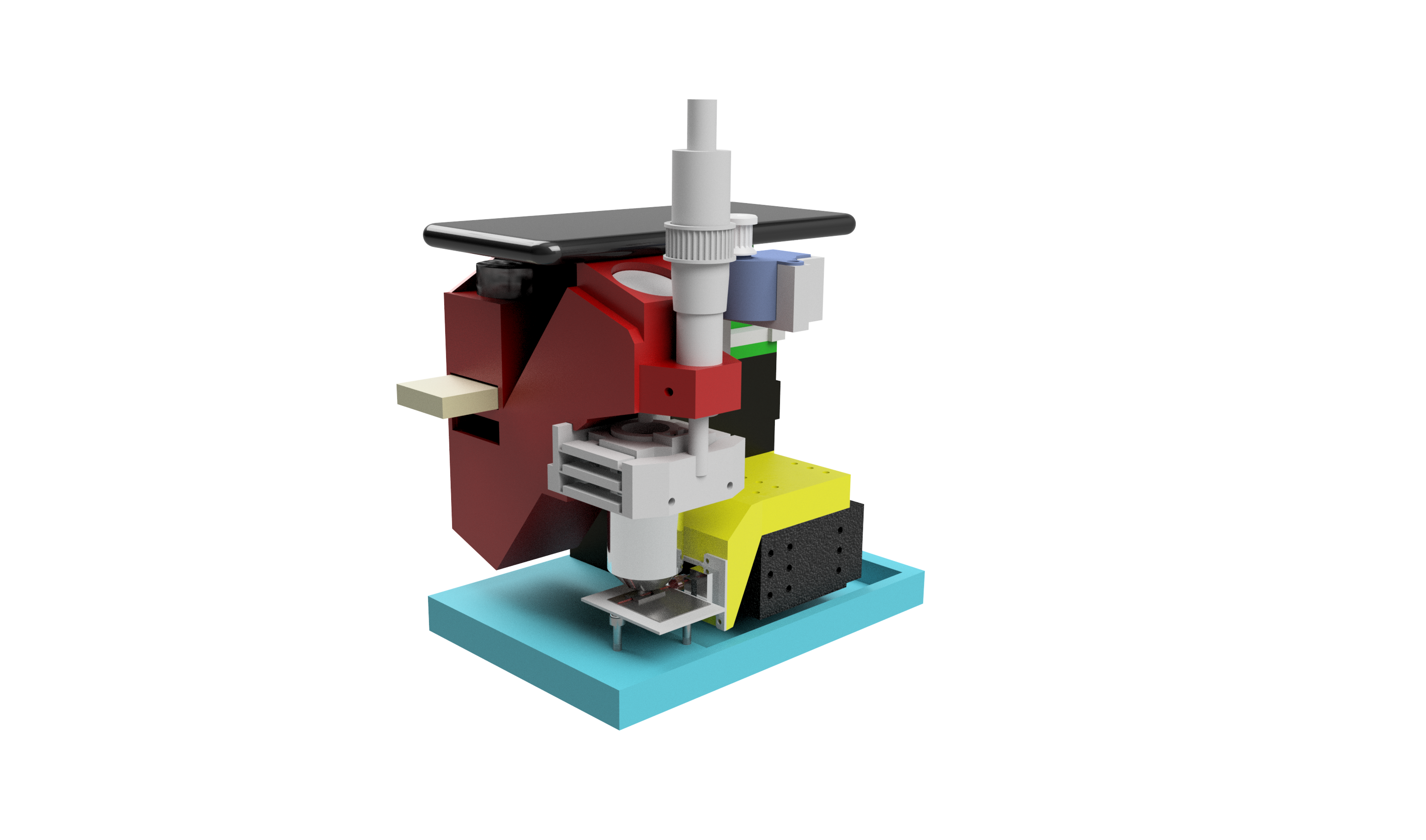

cellSTORM 2

A low-cost super-resolution imaging device based on a cellphone camera and photonic waveguide chips

Preprint

The preprint for the cellSTORM II device, together with a series of applications, can be found on bioRxiv 😊

cellSTORM II

The compact device features:

- autofocus

- automatic coupling mechanism

- on-device super-resolution imaging

- survives cell incubators for several days

- performs autonomous imaging over several days

- dSTORM with <100 nm optical resolution

- costs <1000€ (for single-wavelength imaging)

- optical resolution down to 100 nm

- multiple wavelengths can be used (sequentially)

- TIRF illumination is possible using photonic waveguide chips

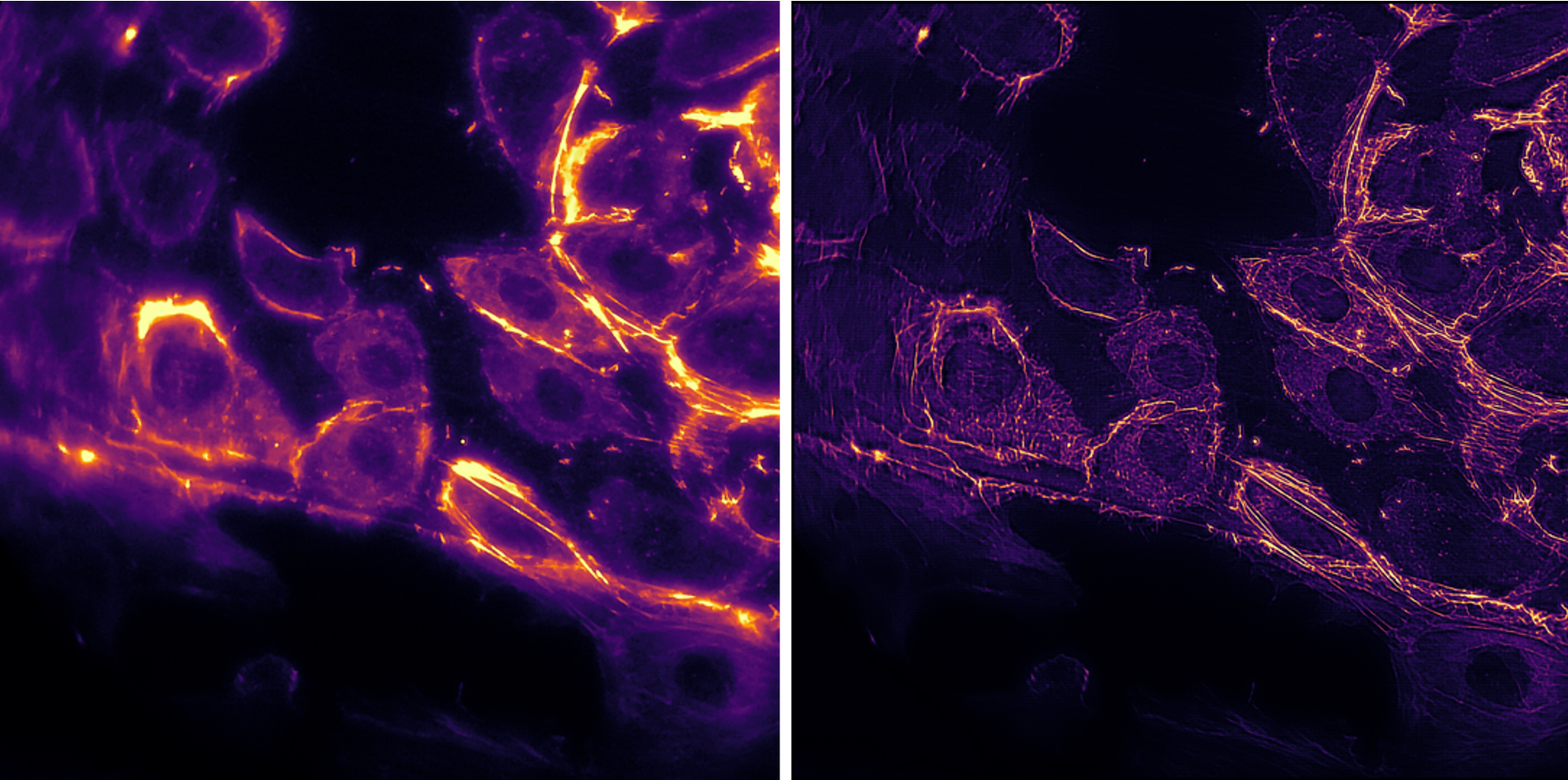

Super-Resolution using Fluctuating Intensity (SRRF, ESI, SOFI, etc.)

Using SRRF from the Henriques lab, it is possible to quickly increase the resolution even without complicated STORM protocols.

The image of actin-labelled HUVEC cells was acquired using a 60x, NA 0.85 objective lens. Moving the coupling lens changes the mode field pattern, and the resulting intensity fluctuations can be used to increase the lateral resolution of fluorescently labelled samples at low excitation power. This makes the method suitable for live-cell imaging.
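The principle behind fluctuation-based methods can be illustrated with second-order SOFI: at zero time lag, the second-order auto-cumulant is simply the per-pixel temporal variance of the image stack, so pixels whose intensity fluctuates are emphasized over the static background. The following is a minimal NumPy sketch, assuming a stack of shape (t, y, x); it is neither SRRF nor the exact processing used for the images here, and the optional `lag` parameter (to suppress noise that is uncorrelated between frames) is our own addition.

```python
import numpy as np


def sofi2(stack: np.ndarray, lag: int = 0) -> np.ndarray:
    """Second-order SOFI auto-cumulant of a (t, y, x) image stack.

    For lag=0 this equals the per-pixel temporal variance. For lag >= 1,
    frame k is correlated with frame k + lag, which suppresses noise that
    is uncorrelated between consecutive frames.
    """
    stack = np.asarray(stack, dtype=np.float64)
    if stack.ndim != 3:
        raise ValueError("expected a stack of shape (t, y, x)")
    if not 0 <= lag < stack.shape[0]:
        raise ValueError("lag must be in the range [0, t)")
    # Remove the temporal mean so that only the fluctuations remain.
    fluct = stack - stack.mean(axis=0, keepdims=True)
    n = stack.shape[0] - lag
    # Average product of fluctuations separated by `lag` frames.
    return (fluct[:n] * fluct[lag:lag + n]).mean(axis=0)
```

For example, `sofi2(stack, lag=1)` on a recording made while moving the coupling lens gives a variance-like image; static background contributes nothing because its fluctuations vanish after subtracting the temporal mean.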

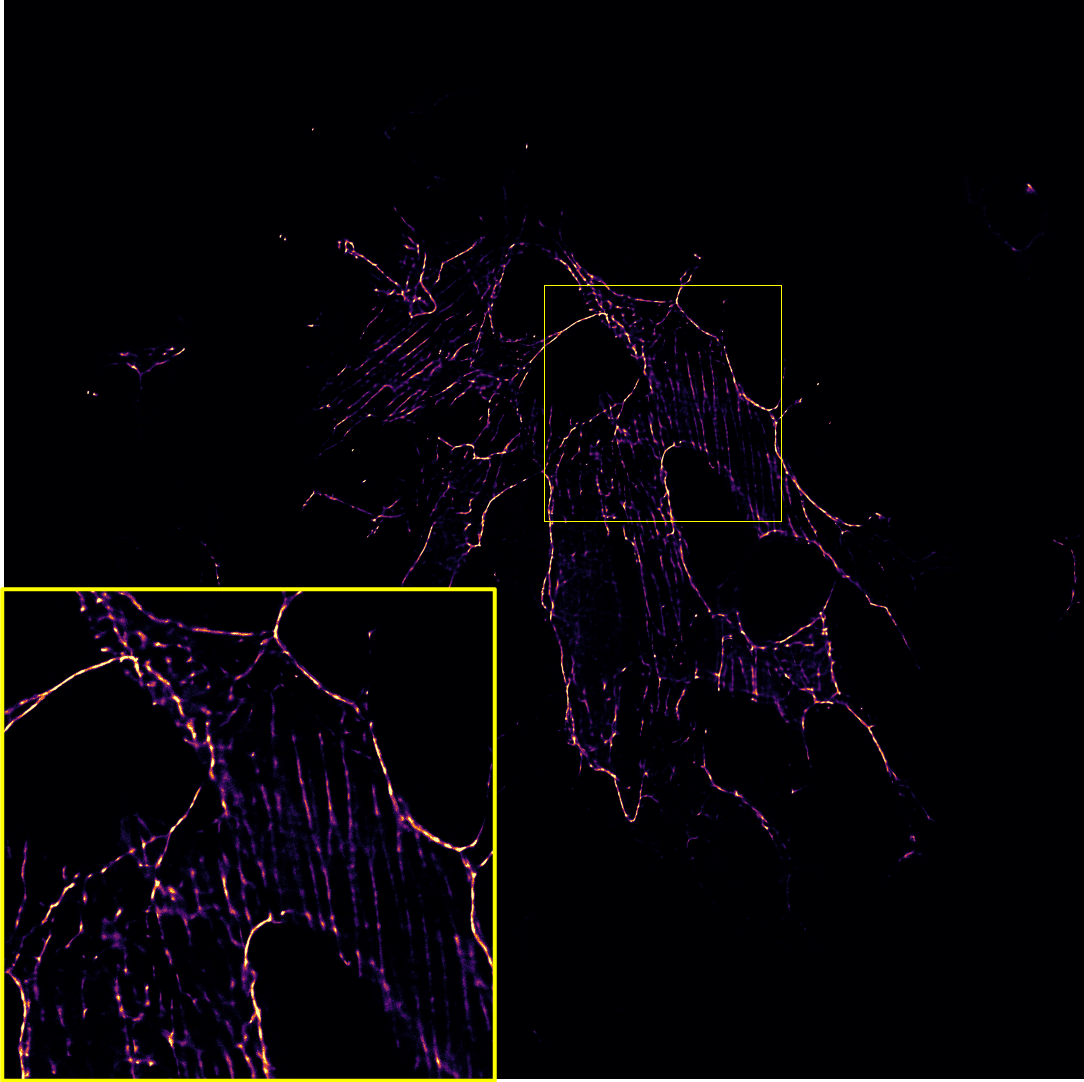

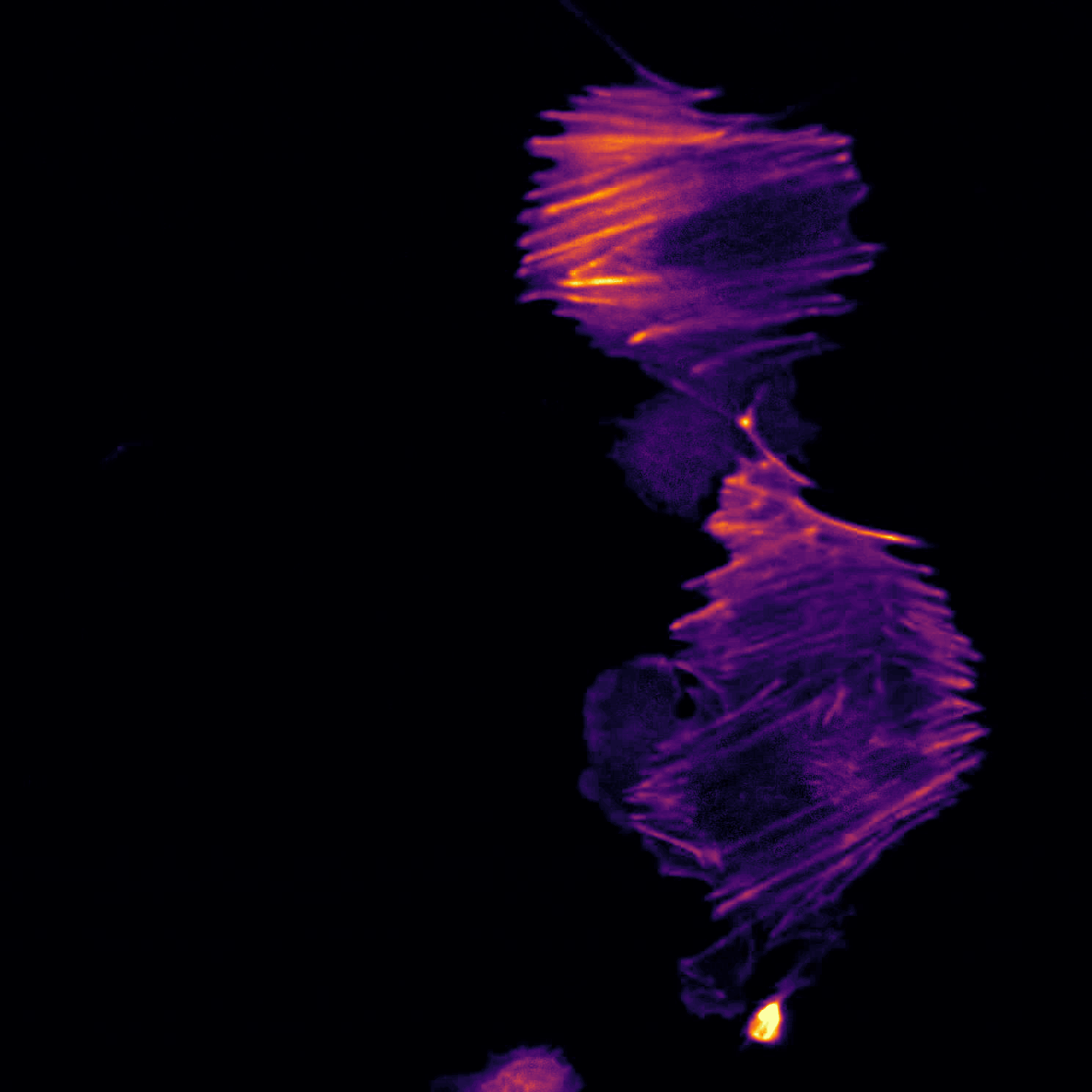

Super-Resolution using SMLM (dSTORM)

With sufficiently high coupling efficiency and laser intensity, the setup enables super-resolution imaging with a final resolution below 100 nm over a large field of view (FOV). This makes it well suited not only for educational purposes, but also for research outside of conventional research and optics labs.
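The sub-100 nm resolution of dSTORM comes from localizing individual blinking fluorophores with sub-pixel precision. As a minimal, hypothetical illustration of that localization step (not the reconstruction software used for these images; established SMLM tools usually fit a 2D Gaussian instead), the intensity-weighted centroid of a small region of interest (ROI) that contains exactly one emitter can be computed with NumPy. The background estimate (the ROI minimum) is a simplifying assumption.

```python
from typing import Tuple

import numpy as np


def localize_centroid(roi: np.ndarray) -> Tuple[float, float]:
    """Estimate the sub-pixel (y, x) position of a single emitter in a small ROI.

    The background is approximated by the ROI minimum; the remaining
    intensity is used as weights for a centre-of-mass estimate.
    """
    roi = np.asarray(roi, dtype=np.float64)
    signal = roi - roi.min()  # crude background subtraction
    total = signal.sum()
    if total <= 0:
        raise ValueError("ROI contains no signal above background")
    yy, xx = np.indices(roi.shape)
    y = (yy * signal).sum() / total
    x = (xx * signal).sum() / total
    return float(y), float(x)
```

Repeating this for every detected spot in thousands of frames and plotting all positions yields the super-resolved image; the centroid is easy to follow but is more sensitive to background and neighbouring emitters than a Gaussian fit.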

![dSTORM image of HeLa cells acquired with the 60x objective](cellstorm_dstorm_hela_60x.png)

Hardware

CAD Designs

If you want to replicate the device, you can find a detailed description, including all necessary parts to order, in this repository:

Assembly Tutorials

We now also have a picture tutorial with a step-by-step guide on how to build the cellSTORM microscope here.

Electronics and Code

To move the lenses and control the laser intensity, we relied on Espressif ESP32s. The wiring diagrams for the electronics, as well as the code to control them wirelessly via MQTT, can be found here:
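A minimal sketch of what a wireless command could look like from a Python client, assuming the `paho-mqtt` package and an MQTT broker reachable on the local network. The topic names, the 0-255 intensity range, the broker address, and the helper functions are hypothetical placeholders; the actual topics and payloads are defined in the ESP32 firmware of the repository.

```python
from typing import Tuple

# Hypothetical topic names: the real topics are defined in the ESP32
# firmware and may differ.
LASER_TOPIC = "cellstorm/laser/intensity"
LENS_TOPIC = "cellstorm/lens"


def laser_command(intensity: int) -> Tuple[str, str]:
    """Build (topic, payload) to set the laser intensity (assumed 0-255 PWM range)."""
    if not 0 <= intensity <= 255:
        raise ValueError("intensity must be in the range 0-255")
    return LASER_TOPIC, str(intensity)


def lens_command(axis: str, steps: int) -> Tuple[str, str]:
    """Build (topic, payload) to move a lens axis by a number of motor steps."""
    if axis not in ("x", "y", "z"):
        raise ValueError("axis must be 'x', 'y' or 'z'")
    return f"{LENS_TOPIC}/{axis}", str(steps)


def send(command: Tuple[str, str], broker: str = "192.168.0.10", port: int = 1883) -> None:
    """Publish a single command to the MQTT broker (requires paho-mqtt)."""
    # Imported lazily so that the command builders work without paho-mqtt.
    import paho.mqtt.publish as publish

    topic, payload = command
    publish.single(topic, payload, hostname=broker, port=port)
```

For example, `send(laser_command(128))` would publish the payload `128` to the assumed laser topic. Keeping message construction separate from publishing makes the commands easy to test without a running broker.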

Bill of Material (BOM)

In addition to the 3D-printed parts from the GitHub repository, you need a set of mechanical, electrical, and optical parts, summarized in the BOM below:

Dead link? eBay is not a very reliable source, since resellers may discontinue a product. If you are looking for a component that cannot be found, file an issue here. Alternatively, copy the name from the “Details” column and search for it on eBay or with a search engine (e.g. “3450 300mW 637nm Dot Laser Module TTL/analog 12VDC” site:laserlands.net). Good luck 🍀

Amazon is more reliable, but we personally try to avoid ordering there (sorry!). We try to keep the list updated with Amazon links.

| Type | Details | Price | Link |
|---|---|---|---|
| Laser 635/637 nm | 3450 300mW 637nm Dot Laser Module TTL/analog 12VDC | 50 € | Laserlands |
| Objective lens | BRESSER DIN-Objektiv 60x, NA 0.85, 160/0.17 | 45 € | eBay |
| 2x Mirror | PF10-03-P01 Ø1” Protected Silver Mirror | 50 € | Thorlabs |
| 2x XY stage | XY Axis Manual Trimming Platform Linear Stage Tuning Sliding Table 40/50/60/90mm, 60x60mm | 80 € | eBay |
| Longpass (640) | Chroma 675/50, well suited for Alexa Fluor 647 | 200-350 € | Chroma |
| Ocular | MIKROSKOP OKULAR PAAR PERIPLAN H 10 X LEITZ WETZLAR GERMANY (pair of Leitz Periplan 10x eyepieces) | 10-90 € | Wie-Tec (eBay is cheaper; the 10x magnification matters!) |
| ESP32 | ESP32 ESP32S WLAN Dev Kit Board Development Bluetooth Wifi WROOM32 NodeMCU | 7 € | Amazon |
| LED buck driver | 3x SPARKFUN ELECTRONICS INC. COM-13705 | 18 € | SparkFun |
| Wires | Various | 10 € | Amazon |
| Power supply | 5 V, 3 A, various | 10 € | Amazon |
| Raspberry Pi + SD card + power supply + housing | Raspberry Pi 3 Set /Bundle: 16GB SD-Karte, HDMI, original Netzteil und Gehäuse (set with 16 GB SD card, HDMI, original power supply, and case) | 70 € | Amazon |
| Optical pickup | Objektiv Optik Laser KES-400A PLAYSTATION 3 Nicht Funktioniert für Ersatzteil (KES-400A PlayStation 3 laser optics, not working, for spare parts). HINT: Look for KES-400A replacement parts on eBay; sometimes you get 10 pieces for 10 € | 1 € | eBay |
| PLA filament | Prusament PLA Prusa Galaxy Black 1kg | 25 € | Prusa |
| Cellphone + camera | Huawei P20 Pro 128GB 6GB RAM Single Sim Twilight, TOP Zustand (top condition) | 300 € | Amazon |
| Ball magnets | T::A Kugelmagnete 5 6 10 mm N45 Neodym Magnete NdFeB Menge wählbar extrem stark (N45 neodymium ball magnets, 5/6/10 mm, selectable quantity) | 10 € | Amazon |
| Screws | M3 DIN912 hex-key screws. HINT: the screws need to be ferromagnetic (e.g. galvanized steel, not stainless steel); M3 DIN912 screws of various lengths may work. We usually have tons of them from the @openUC2 project | 10 € | Amazon |
| Micrometer screw | RS Electronic, 0.1 mm resolution | 40 € | RS Electronics |

ATTENTION: In case any parts are missing, please file an issue or contact us! We are happy to help out and to improve 😊
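As a quick cross-check of the cost estimate, the prices from the BOM table above can be summed. This sketch simply transcribes the table into a Python dictionary (prices change frequently, so treat the numbers as a snapshot) and sums the low and high ends of the price ranges.

```python
from typing import Dict, Tuple

# (low, high) prices in EUR, transcribed from the BOM table.
# Items with a single price use the same value for low and high.
BOM_PRICES_EUR: Dict[str, Tuple[int, int]] = {
    "Laser 635/637 nm": (50, 50),
    "Objective lens": (45, 45),
    "2x Mirror": (50, 50),
    "2x XY stage": (80, 80),
    "Longpass (640)": (200, 350),
    "Ocular": (10, 90),
    "ESP32": (7, 7),
    "LED buck driver": (18, 18),
    "Wires": (10, 10),
    "Power supply": (10, 10),
    "Raspberry Pi bundle": (70, 70),
    "Optical pickup": (1, 1),
    "PLA filament": (25, 25),
    "Cellphone + camera": (300, 300),
    "Ball magnets": (10, 10),
    "Screws": (10, 10),
    "Micrometer screw": (40, 40),
}


def bom_total(prices: Dict[str, Tuple[int, int]]) -> Tuple[int, int]:
    """Sum the low and high price estimates of all BOM items."""
    low = sum(lo for lo, _ in prices.values())
    high = sum(hi for _, hi in prices.values())
    return low, high


low, high = bom_total(BOM_PRICES_EUR)
print(f"Estimated BOM total: {low}-{high} EUR")  # Estimated BOM total: 936-1166 EUR
```

With the listed prices, the spread of the total comes entirely from the filter (200-350 €) and the eyepieces (10-90 €).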

Software

For the software, we relied on three different apps for recent Android phones (in our case a Huawei P20 Pro).

They are briefly summarized here and described in detail below:

| Name | Purpose | Source |
|---|---|---|
| STORM-Controler | Remote control (MQTT) of the laser intensity, coupling lens, and focus | APP: STORM-Controler |
| STORM-Imager | Long-term image acquisition with auto-focus and auto-coupling; scheduling of fluctuating-intensity measurements inside incubators | APP: STORM-Imager |
| FreeDCam | Full control over the cellphone's camera: RAW and video image acquisition | FreeDCam |
| ImJoy Fiji.JS Learn2Sofi | Fiji.JS plugin to process temporal stacks | Made by Wei @ ImJoy |

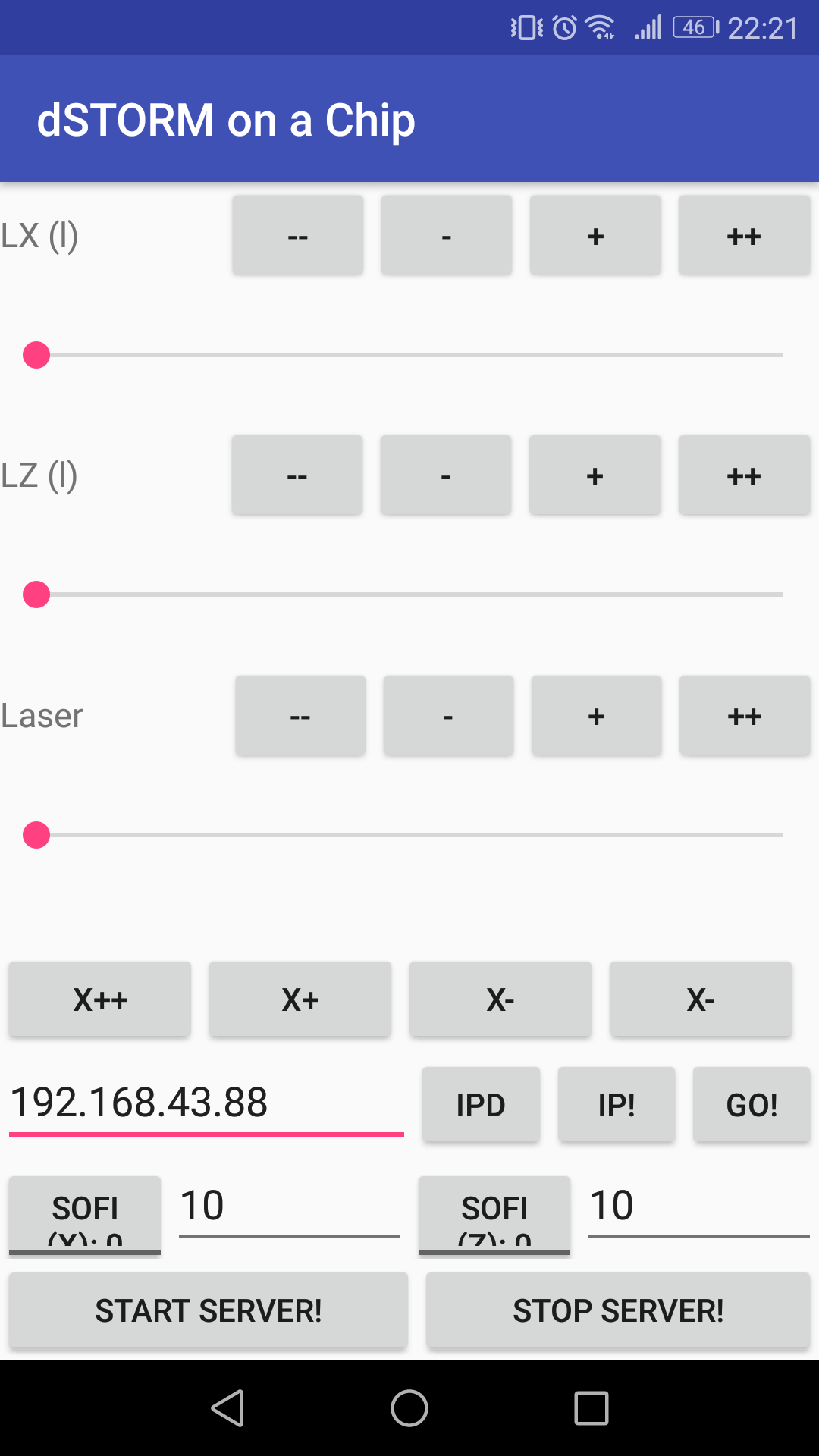

Android APP: STORM-Controler

To control the lens or laser using a customized MQTT controller app, visit this repository:

This app provides basic hardware controls:

Android APP: STORM-Imager

This app records images, controls the device, and predicts a super-resolved result from the camera live stream. The app can be found here:

Autofocus inside the APP:

This is just an example of how the cellphone maintains focus: the app maximizes a focus metric (here, the standard deviation of the image intensities) as a function of the focus motor position along z.
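The focus search described above can be sketched as an exhaustive scan over motor positions. This is a minimal illustration, not the app's implementation: `acquire_frame(z)` is a hypothetical callback that moves the focus motor to position `z` and returns a camera frame, and the app may use a different search strategy than a simple scan.

```python
from typing import Callable, Sequence

import numpy as np


def focus_metric(frame: np.ndarray) -> float:
    """Standard deviation of the pixel intensities (higher means sharper)."""
    return float(np.asarray(frame, dtype=np.float64).std())


def autofocus(acquire_frame: Callable[[int], np.ndarray],
              z_positions: Sequence[int]) -> int:
    """Scan the focus motor and return the position with the highest metric.

    `acquire_frame(z)` is a hypothetical callback that moves the focus motor
    to `z` and returns the corresponding camera frame.
    """
    scores = [focus_metric(acquire_frame(z)) for z in z_positions]
    return z_positions[int(np.argmax(scores))]
```

A defocused image is blurred and therefore has lower contrast, so its standard deviation drops; the position with the maximal standard deviation is taken as the focus. In practice, a coarse scan followed by a finer scan around the best position keeps the number of acquisitions small.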

SOFI-based superresolution imaging inside the APP:

This is an example of SOFI-based super-resolution imaging using the neural network described below. We used fixed E. coli labelled with ATTO 647. The illumination fluctuates because of the discrete mode pattern in the single-mode waveguide chip: changing the input field changes the intensity pattern.

Android APP: FreeDCam (cellSTORM module)

For the dSTORM experiments, we used the open-source app FreeDCam, originally developed and maintained by Killerink. We provide a modified version for the Huawei P9 and P20, which is used in this work. It enables:

- fast read-out and saving of cropped RAW frames to the SD card

- streaming of the RAW buffer to a C server

For streaming the data to a server, this manual gives more detailed information. It requires Android Studio, which compiles the C program into an executable. Inside the app, you need to enter the IP address of the server.

Many thanks to @Killerink for making this work!

Settings for FreeDCam (Huawei P20 Pro)

We used the following settings:

Video Settings:

General Settings:

Acquisition Settings:

NN-based Super-Resolution Imaging: “Learn2Fluct”

The Python- and TensorFlow-based neural network for “SOFI”-like image reconstruction on a cellphone, based on ConvLSTM2D layers, can be found here:

It aims to transform a stack of noisy, low-resolution images with a varying illumination pattern:

into high-resolution images:

It is part of the app “STORM-Imager” and can also be used as an interactive website (Live-Demo). To train the model, have a look at the recent TensorFlow 2.3.0 implementation using Keras and TFLite, which can be found in the GitHub repository here.
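To make the ConvLSTM2D idea concrete, here is a toy Keras model. It assumes an input stack of 30 frames of 128 x 128 pixels (the dimensions the ImJoy plugin expects) and a single output image of the same size; the number of layers, filters, the sigmoid output, and the function name are hypothetical choices, not the architecture in the repository.

```python
import tensorflow as tf


def build_learn2fluct_like(t: int = 30, size: int = 128, filters: int = 16) -> tf.keras.Model:
    """Toy ConvLSTM2D network mapping a (t, size, size, 1) stack to one image.

    Layer sizes are illustrative assumptions, not the published architecture.
    """
    inputs = tf.keras.Input(shape=(t, size, size, 1))
    # The ConvLSTM layers aggregate the temporal intensity fluctuations while
    # keeping the spatial layout of the image (padding="same").
    x = tf.keras.layers.ConvLSTM2D(filters, kernel_size=3, padding="same",
                                   return_sequences=True)(inputs)
    # The second layer returns only its final state: one feature map per pixel.
    x = tf.keras.layers.ConvLSTM2D(filters, kernel_size=3, padding="same",
                                   return_sequences=False)(x)
    # A 1x1 convolution projects the features onto a single output channel.
    outputs = tf.keras.layers.Conv2D(1, kernel_size=1, activation="sigmoid")(x)
    return tf.keras.Model(inputs, outputs)


model = build_learn2fluct_like()
model.compile(optimizer="adam", loss="mse")
```

The recurrent layers see the frames one after another, so the network can learn which temporal fluctuations belong to an emitter, similar in spirit to the cumulants computed in SOFI.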

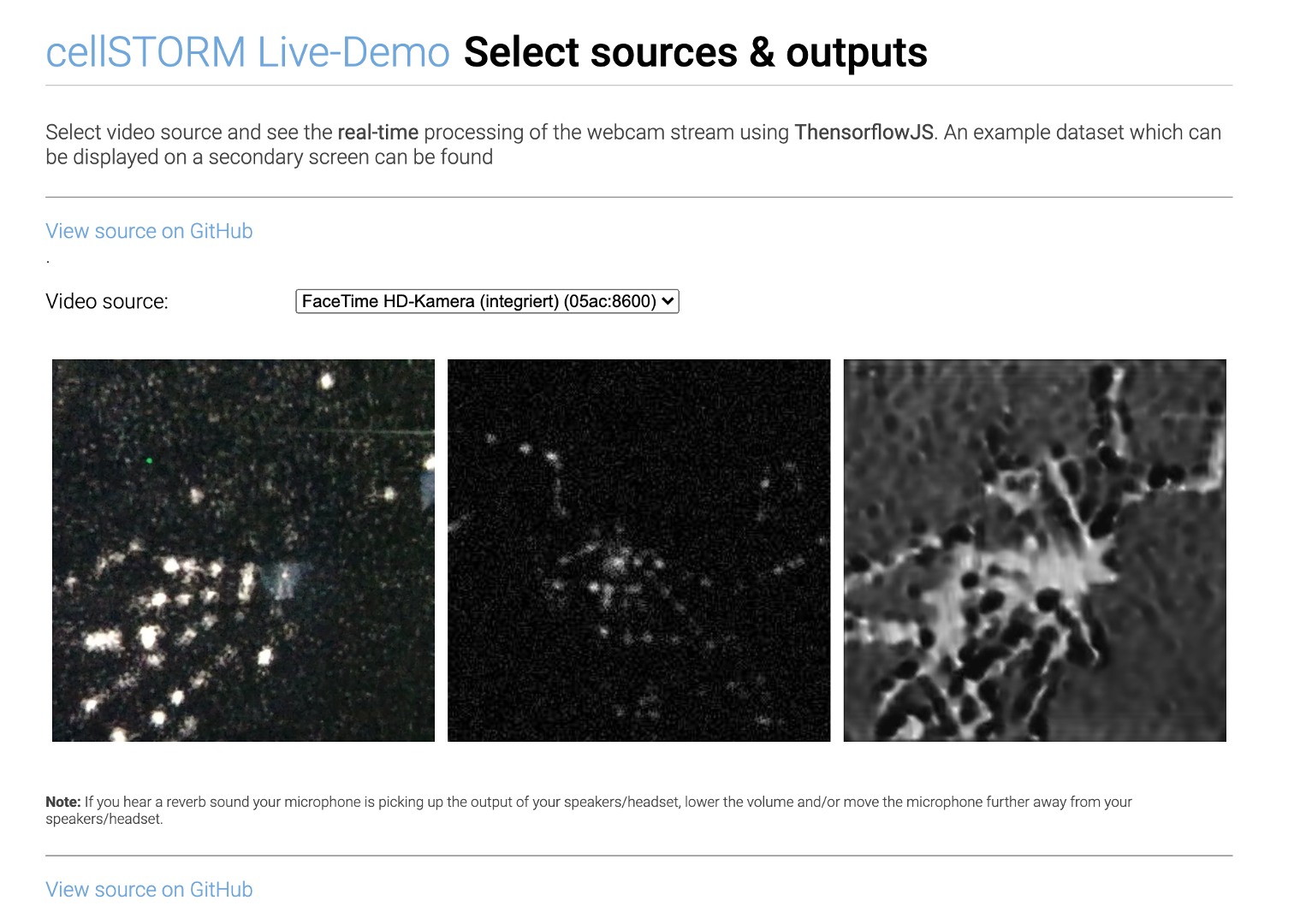

Live-Demo using Webcam

We implemented a live demo of the pre-trained SOFI network, which is available here. It runs in JavaScript and does not transfer any (!) data to the internet: once the neural network model is downloaded, it runs locally.

Note: It is in an experimental stage and not optimized for performance. We do not guarantee proper functionality. Use it at your own risk!

The model was tested on a MacBook Pro in the Chrome browser, where it runs at ~1 fps.

ImJoy Plugin

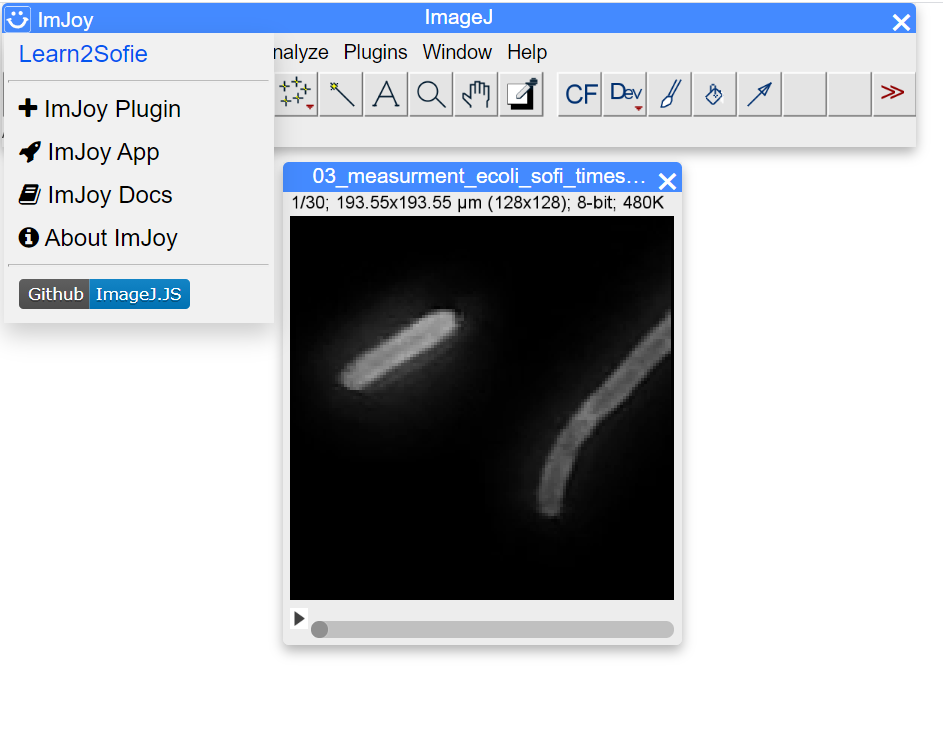

Wei made an awesome plugin for the browser-based Fiji that wraps the JavaScript live demo in a Fiji.JS applet. It is very easy to use, and no installation is necessary!

Go to the ImJoy-Plugin Webpage

It automatically loads a sample TIF stack, which you can find here.

Starting the Plugin: Opening the plugin is as easy as selecting it from the ImJoy icon on the left-hand side:

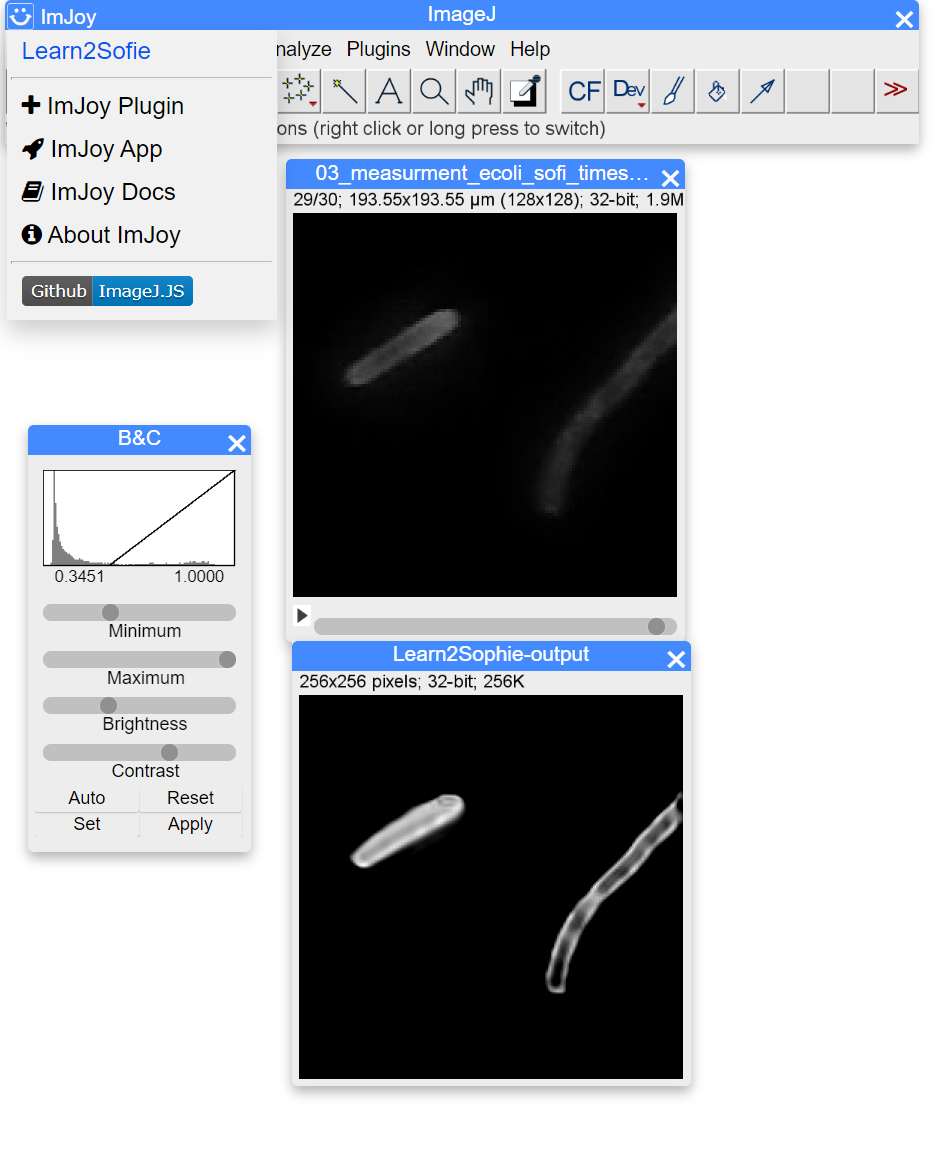

Executing the Plugin: Once you hit the Learn2SOFI button, the image stack is propagated through the network; after processing, the result looks like this:

You can start playing with your own data by uploading your own time stack. It must have the following dimensions: Nx = 128, Ny = 128, t = 30.
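If your recording is larger than 128 x 128 pixels or has more than 30 frames, it has to be cut down before uploading. A minimal NumPy sketch, assuming a (frames, y, x) array; keeping the first 30 frames and cropping the central region are our own choices, and only the final dimensions come from the plugin's requirements.

```python
import numpy as np


def to_plugin_stack(stack: np.ndarray, t: int = 30, size: int = 128) -> np.ndarray:
    """Center-crop a (frames, y, x) stack to (t, size, size) as float32."""
    stack = np.asarray(stack)
    if stack.ndim != 3:
        raise ValueError("expected a stack of shape (frames, y, x)")
    n, h, w = stack.shape
    if n < t or h < size or w < size:
        raise ValueError(f"stack {stack.shape} is smaller than ({t}, {size}, {size})")
    y0 = (h - size) // 2
    x0 = (w - size) // 2
    # Keep the first t frames and the central size x size region.
    return stack[:t, y0:y0 + size, x0:x0 + size].astype(np.float32)
```

The result can then be saved as a TIF stack, for example with `tifffile.imwrite("my_stack.tif", to_plugin_stack(raw))` from the tifffile package, and uploaded to the plugin.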

EnJoy! :-)

Google Colab

(1) Export a Keras model (TensorFlow v2.2!) to TensorFlow Lite (Android)

Datasets

We provide many datasets in a publicly accessible repository. Please have a look at Zenodo [coming soon; we are still waiting for all data].

Note: Not all experiments are fully documented. If you need additional experimental parameters, please don’t hesitate to contact us so that we can add them!

Tutorials

Video tutorials to set up the cellSTORM device

cellSTORM - Part 1: Set up the MQTT server and connect the cellphone

https://www.youtube.com/watch?v=gefJPZ8_ua8&feature=youtu.be

cellSTORM - Part 2: Align the lens (OPU) and the laser

https://www.youtube.com/watch?v=GFoVPgfUFtI&feature=youtu.be

cellSTORM - Part 3: Adding the waveguide chip and starting the coupling

https://www.youtube.com/watch?v=-dWIeXHAiBc&feature=youtu.be

cellSTORM - Part 4: Set up the optical part

https://www.youtube.com/watch?v=qdbaAQTLw-c&feature=youtu.be

cellSTORM - Part 5: Set up the imaging using the cellphone

https://www.youtube.com/watch?v=fhmkS0Ywucg&feature=youtu.be

cellSTORM - Part 6: Set up FreeDCam for best SNR performance

https://www.youtube.com/watch?v=Evdc-384KZk&feature=youtu.be

Contribute

If you have a question or find an error, please file an issue! We are happy to improve the device!

License

Please have a look at the dedicated License file.

Disclaimer

We do not give any guarantee for the proposed setup. Please use it at your own risk. Keep in mind that laser sources can be very harmful to your eyes and your environment!